Is this post for you?

Before we get into the definition, here is a quick check.

You have invested in multiple systems - some collection of ERP, PLM, DAM, MAM, CMS, PIM, regulatory databases, supplier portals, and others. Some of those systems have been in place for years. They hold real value: product knowledge, content assets, compliance records, insights, domain expertise that took decades to accumulate.

And yet:

- You still can't get a straight answer about your products in one hit.

- Your AI initiatives keep hitting the same wall - the information underneath them isn't reliable enough to trust the outputs.

- Your subject matter experts spend too much of their time reconciling data across systems instead of doing the work only they can do.

- Every new regulatory requirement lands like a crisis.

- You can't move at the speed you want to move, because launching something that draws on everything you know requires a manual assembly project, not a configuration exercise.

If that is your situation, then this post is for you. What you are describing is not a systems problem; it is a meaning problem, and an information backbone is what resolves it.

Bottom line up front:

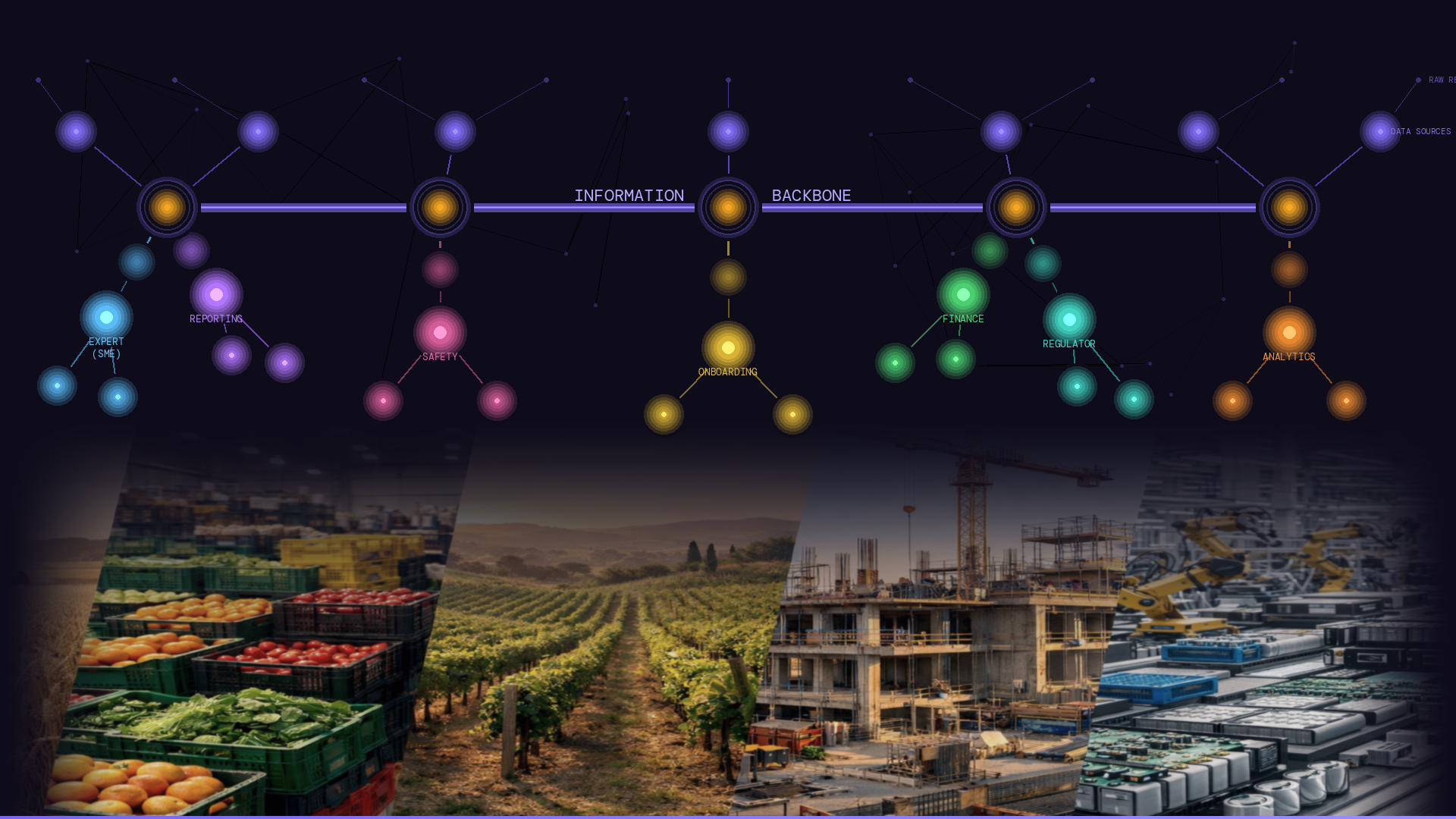

An information backbone is the place - in your organization's systems - where a human or a machine can go to answer a specific and important question, without having to guess, pattern-match, or cross-reference with a separate system. It gives certainty and context, and it is the structural foundation upon which everything else - your AI, your APIs, your regulatory outputs, your partner integrations - should be built.

What it is not

It helps to start with what an information backbone is not:

It is not a data lake. A data lake stores information at scale. It does not govern meaning. You can have a very full data lake and still have no authoritative answer to the question "what does this product contain, and what regulations apply to it?"

It is not an API gateway. An API gateway manages how systems connect and communicate. It does not define what the information being exchanged actually means. Two systems can be perfectly connected and still disagree about the definition of "material."

It is not a reporting layer. Reports are outputs. A backbone is what makes those outputs trustworthy. Building better reports on top of ungoverned information does not fix the underlying problem - it just produces well-formatted uncertainty.

And it is not a label or a document generation service bolted onto the end of your process. This is perhaps the most common mistake, and it is worth naming directly. Organizations under pressure to produce compliant outputs - whether for partners, regulators, or new digital requirements - often reach for a service that generates those outputs from whatever information they already have. The output looks right. But the underlying information hasn't changed. The meaning hasn't been governed. The next time a question is asked - by a different system, a different partner, a different regulatory authority - the process begins again from scratch, with the same fragmented foundation.

The backbone is not at the end of the process; it is at the beginning.

A plain-language definition

Here is how I describe it when I want to be precise, steering clear of technologies and technical terms:

An information backbone is a shared, governed model of what your organization knows - structured so that the relationships between things are explicit, the definitions are controlled, and the reference data is maintained. It is the place where context and meaning live.

Think of it less like a filing system and more like a navigational chart. A filing system stores things, whereas a chart tells you exactly where everything is, how it relates to everything else, and what it means to move from one place to another. When you need to answer a question - about a product, a component, a regulatory requirement, a supplier relationship - the chart gives you a certain answer, not a probable one. You do not have to triangulate across three systems to find it. You do not have to ask a subject matter expert to interpret it. The meaning is already there, built into the structure.

That certainty is the operational value of a backbone. Not just consistency - certainty.
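For readers who do think in code, here is a minimal sketch of that idea, not a definitive implementation. It assumes a toy model in which definitions are controlled, relationships are explicit, and a question is answered by lookup rather than inference. Every name in it (`Backbone`, `Material`, `MAT-001`, `REG-EXAMPLE-1`, `PRODUCT-A`) is hypothetical and chosen only for illustration.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Material:
    code: str        # controlled identifier (hypothetical)
    definition: str  # the single governed definition of this material


@dataclass
class Backbone:
    materials: dict[str, Material] = field(default_factory=dict)
    # Explicit relationships, held as data rather than inferred at query time.
    contains: dict[str, set[str]] = field(default_factory=dict)      # product -> material codes
    regulated_by: dict[str, set[str]] = field(default_factory=dict)  # material code -> regulation codes

    def define_material(self, code: str, definition: str) -> None:
        self.materials[code] = Material(code, definition)

    def add_component(self, product: str, material_code: str) -> None:
        # Refuse a relationship to anything that has no governed definition.
        if material_code not in self.materials:
            raise KeyError(f"undefined material: {material_code}")
        self.contains.setdefault(product, set()).add(material_code)

    def add_regulation(self, material_code: str, regulation_code: str) -> None:
        if material_code not in self.materials:
            raise KeyError(f"undefined material: {material_code}")
        self.regulated_by.setdefault(material_code, set()).add(regulation_code)

    def answer(self, product: str) -> dict[str, list[str]]:
        # "What does this product contain, and what regulations apply to it?"
        materials = sorted(self.contains.get(product, set()))
        regulations = sorted(
            {r for m in materials for r in self.regulated_by.get(m, set())}
        )
        return {"contains": materials, "regulations": regulations}


backbone = Backbone()
backbone.define_material("MAT-001", "Example governed definition")
backbone.add_component("PRODUCT-A", "MAT-001")
backbone.add_regulation("MAT-001", "REG-EXAMPLE-1")
print(backbone.answer("PRODUCT-A"))
```

The design choice worth noticing is that `add_component` rejects anything without a governed definition. In this sketch, that refusal is what keeps the answer certain rather than probable.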

What it actually resolves

Beyond the simple definition, here are some of the common problems that an information backbone resolves, to help sharpen the picture:

"We have the data. We just can't trust it." An organization has invested heavily in systems and still feels exposed. The backbone resolves this not by adding more data, but by governing the meaning of the data you already have. When meaning is defined explicitly - in the model, not assembled at runtime - the outputs become defensible. You can trace every answer to its governed source.

"Every AI project hits the same wall." The wall is not the AI; it is the lack of a foundation beneath it. AI tools consume meaning. If that meaning is inconsistent, ungoverned, or assembled at query time from fragmented sources, the outputs will be unreliable. A governed backbone gives AI something certain to work from - and makes AI tools interchangeable, because the meaning lives in the backbone, not in the tool.

"Our subject matter experts spend half their time on reconciliation." When meaning is fragmented across systems, the people who understand the domain become the translation layer between them. That is an expensive, invisible, and entirely avoidable tax on your most knowledgeable people. The backbone removes it - by holding meaning once, governed by the experts who understand it, and making it available to every system that needs it.

"We cannot launch a product that leverages all of our information - it is too fragmented." This is the commercial consequence of fragmented meaning. The assets and knowledge exist, but because different parts of the estate hold different versions of what things mean, assembling them into a coherent product or experience requires manual effort that makes speed impossible. This is a horizontal information silo problem. The backbone is what makes your information estate a coherent, launchable asset rather than a collection of siloed repositories.

"Our end-to-end information supply chain is not joined-up." As well as information being siloed horizontally across multiple single-purpose systems, there is also a common problem along the information supply chain itself: data is not joined up from one step to the next. This makes it hard to answer questions that span the chain, from early R&D or commissioning through to end-use scenarios.

a) This might be due to boundary degradation across steps in the supply chain: brittle legacy connections lose data when their caretakers move on to new jobs, when reference data gets out of sync, or when model updates (regulatory or in-house) are not cascaded reliably.

b) It might be due to manual processes that require humans to move context across boundaries as you step through the chain, leaving no machine-readable context or meaning.

The symptoms of this are the same either way: you cannot get a good answer to a specific question that looks across your supply chain in one hit. Instead, you have to go hunting and assembling!

"Every new regulatory requirement feels like a crisis." When machine-readable compliance outputs have to be assembled from systems that were never designed to produce them, every new mandate is a program of work. The backbone changes this. If your information is already held in a governed, machine-readable form on an information backbone, a regulatory change can be as simple as a reference data change. Even when it requires a model update, a governed backbone is the most reliable and efficient place to make that change, because machines can both understand it and update it.

"We're locked into our current vendors because everything is entangled." When AI tools or platform vendors are deeply entangled with how your information is stored and interpreted, switching them means unpicking that entanglement. A good current example is MCP tools and agent skills being configured with domain knowledge to handle the idiosyncrasies of a specific LLM; without an information backbone, this breaks when you change LLMs. A backbone separates meaning from tooling and, importantly, the governed model lives independently of any vendor. Switching providers can then happen against the APIs that expose the model in machine-readable form, without requiring a new articulation of domain context and meaning.

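To make the regulatory point above a little more concrete, here is a minimal sketch of what "a regulatory change as a reference data change" could look like. It assumes limits are held as governed reference data and that the compliance check only ever reads them. The substance name, the limit values, and the regulation identifiers are all invented for illustration and do not correspond to any real rule.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Limit:
    max_ppm: float  # permitted concentration (illustrative value)
    source: str     # hypothetical identifier of the governing rule


def check(composition: dict[str, float], limits: dict[str, Limit]) -> list[str]:
    """Return one finding per substance that exceeds its governed limit."""
    findings = []
    for substance, ppm in sorted(composition.items()):
        limit = limits.get(substance)
        if limit is not None and ppm > limit.max_ppm:
            findings.append(
                f"{substance}: {ppm} ppm exceeds {limit.max_ppm} ppm ({limit.source})"
            )
    return findings


# Reference data before and after a hypothetical regulatory change.
limits_v1 = {"SUBSTANCE-X": Limit(1000.0, "REG-EXAMPLE-1")}
limits_v2 = {**limits_v1, "SUBSTANCE-X": Limit(500.0, "REG-EXAMPLE-2")}

composition = {"SUBSTANCE-X": 800.0}

# The check itself is unchanged; only the reference data differs.
print(check(composition, limits_v1))
print(check(composition, limits_v2))
```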
Important caveat: a backbone does not resolve every information problem. It does not

- automatically clean dirty data,

- replace legacy systems, or

- eliminate the need for change management.

What it does is provide the governed foundation, so that you can size up those problems and work through them.

I hope the above provides enough context and meaning to clarify the definition.

Next in this series:

Post 5: The quiet power of reference data - the unglamorous work that gives your backbone a stable vocabulary, and why getting it wrong undermines everything else.

Post 6: The characteristics of an information backbone - When you take a closer look at an information backbone, what should you see? The answer is a solid set of characteristics, focused on its foundational purpose.

Bonus Post: Build or buy your information backbone? Why the true cost of building a governed information backbone for a high-trust environment is almost always underestimated - and what that means for your build vs buy decision.

Previously in The COO's Machine-Readable Information Backbone series:

Post 1: Preparing for true machine-readable digital product labels - Machine readability is a meaning problem, not a format problem. Most organizations focus on file formats and miss the foundational architecture problem entirely. This is what it actually demands from your organization.

Post 2: Machine-readable isn't just for machines - Better information architecture foundations improve the experience of the humans who work with product data every day - your SMEs, compliance teams, and end users. Better foundations for machines are better foundations for people.

Post 3: Why AI needs stable meaning - AI operating on ungoverned data is making guesses, and in regulated environments that isn't good enough. A proper information backbone eliminates hallucination for the facts that matter, and gives COOs the freedom to swap AI providers without operational friction.