At some point in almost every serious information backbone conversation, the question comes up: could we build this ourselves?

It is a reasonable question. Your organization has engineering capability. You have existing systems to integrate with. You understand your own domain better than any vendor does. And there is an understandable instinct - particularly in regulated sectors where information is a competitive and compliance asset - to keep strategic infrastructure in-house.

This post works through that question honestly. The answer is not always "buy." But the conditions under which "build" is the right answer are narrower than most organizations initially believe - because the true cost of building a governed information backbone, purpose-built for high-trust environments, is almost always underestimated.

Bottom line up front:

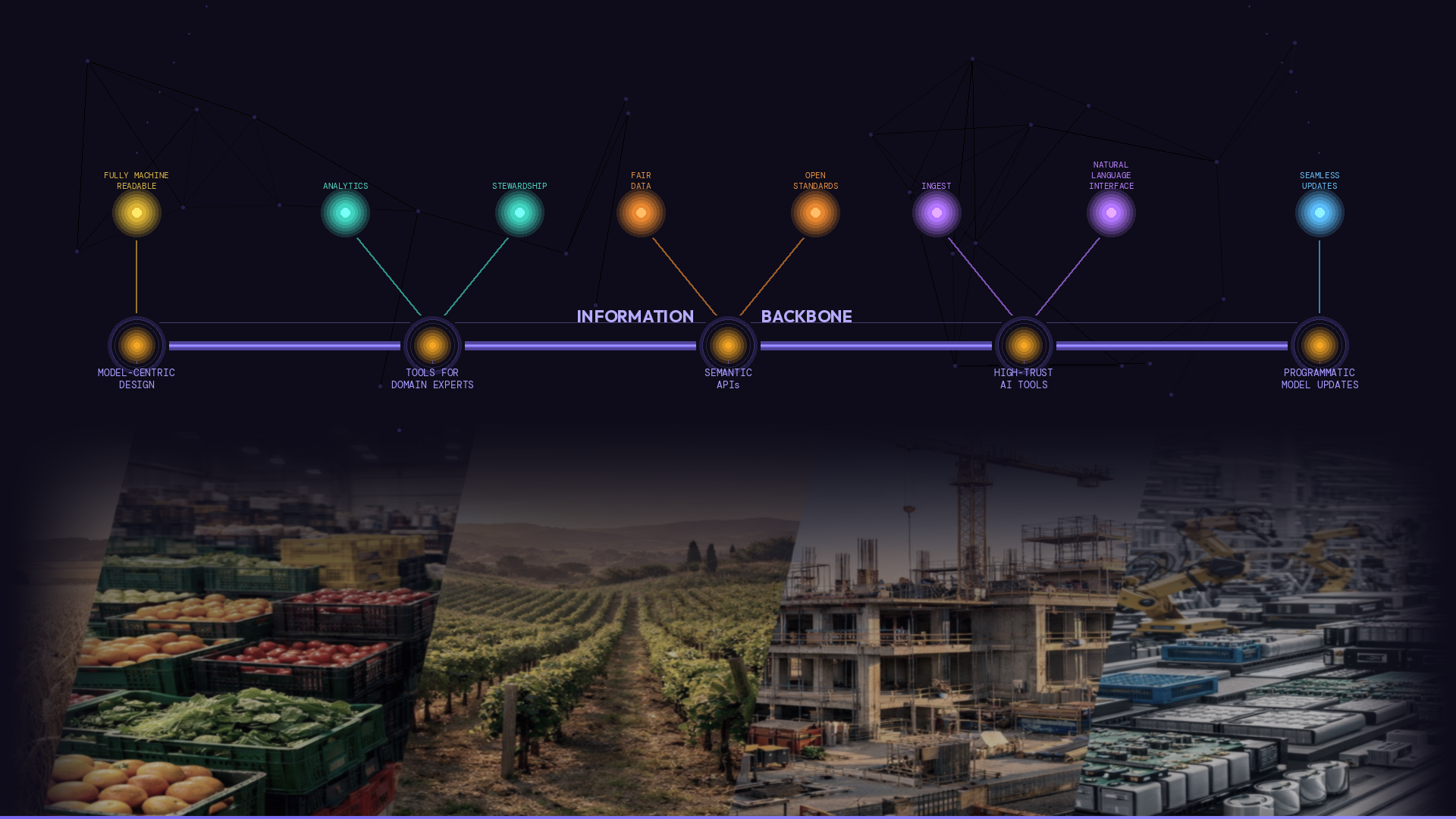

Building a governed information backbone is not a data engineering project. It is a sustained investment in model-centric infrastructure, SME-native tooling, semantic APIs, and operational model governance - including the ability to update your information model programmatically as your domain, regulations, and organization evolve. The organizations that underestimate this do not just overspend. They end up with something that cannot do the job.

What you are actually building

The first mistake in most build estimates is a failure to define the scope accurately. When organizations say "we could build this," they typically have in mind something like: a graph database, a data model, some APIs, and a front end for SMEs to interact with. That is the visible part. It is not the hard part.

A governed information backbone for a regulated, high-trust environment has to do several things that are easy to underestimate individually, and genuinely demanding in combination:

- Model-centric design, not just data storage. The backbone does not just store information - it governs meaning. That means entities, explicit relationships, controlled vocabularies, and reference data that map to how your domain actually works. Designing this well requires deep domain engagement and an information architecture discipline that is distinct from data engineering.

- SME-native tooling. The backbone only works if the people who understand the domain can maintain it without IT involvement. Building tooling that exposes the information model to subject matter experts - in a form they can actually use, that reinforces governance rather than undermining it - is a UX and information design challenge that most engineering teams underestimate significantly. It typically gets built, rebuilt, and rebuilt again.

- Semantic APIs, not just data APIs. An API that exposes data fields is straightforward to build. An API that reflects the governed information model - expressing relationships, classifications, and shared meaning in a form that partner systems and regulatory platforms can consume directly - is a different proposition. It requires the model to be sound before the API can be sound.

- Infrastructure for a regulated environment. Graph database hosting, scaling, security, access controls, versioning, backup, failover, and auditability do not come free. In regulated sectors, the infrastructure requirements are not optional - they are the baseline.

- High-trust AI. AI on ungoverned data has to guess, and in regulated environments that is not good enough. A properly structured information backbone removes the need for gap-filling by providing a tightly defined model, well-governed reference data, and machine-readable, tightly validated instance data.

- Operational model governance. The backbone is not a build-once asset. Your information model will change: new regulatory requirements, product portfolio evolution, domain expansion, reference data updates. The tooling and processes to manage those changes in a controlled, traceable way - without breaking downstream systems - are themselves a significant engineering and governance challenge.
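
To make the difference between storing data and governing meaning concrete, here is a minimal Python sketch. It is an illustration under assumed names, not a description of any particular platform: `EntityType`, `validate`, the `Product` and `Substance` types, and the `hazard_class` vocabulary are all hypothetical. It shows instance data being checked against a model that declares controlled vocabularies and explicit relationships, so that nothing downstream has to guess.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class EntityType:
    """A hypothetical entity type in the information model."""
    name: str
    # property name -> allowed values (a controlled vocabulary)
    vocabularies: dict[str, frozenset[str]] = field(default_factory=dict)
    # relationship name -> name of the entity type it must point at
    relationships: dict[str, str] = field(default_factory=dict)


def validate(instance: dict, model: dict[str, EntityType]) -> list[str]:
    """Return a list of governance violations; an empty list means valid."""
    etype = model.get(instance.get("type"))
    if etype is None:
        return [f"unknown entity type: {instance.get('type')!r}"]
    errors = []
    for prop, allowed in etype.vocabularies.items():
        value = instance.get(prop)
        if value not in allowed:
            errors.append(f"{prop}={value!r} is not in the controlled vocabulary")
    for rel, target_type in etype.relationships.items():
        target = instance.get(rel)
        if not isinstance(target, dict) or target.get("type") != target_type:
            errors.append(f"{rel} must reference a {target_type}")
    return errors


# Illustrative model and instances; every name here is an assumption.
MODEL = {
    "Substance": EntityType("Substance"),
    "Product": EntityType(
        "Product",
        vocabularies={"hazard_class": frozenset({"none", "irritant", "toxic"})},
        relationships={"contains": "Substance"},
    ),
}

good = {"type": "Product", "hazard_class": "irritant", "contains": {"type": "Substance"}}
bad = {"type": "Product", "hazard_class": "mild-ish", "contains": "some text"}

print(validate(good, MODEL))  # no violations
print(validate(bad, MODEL))   # one vocabulary violation, one relationship violation
```

Even this toy version hints at the scope problem: the validation logic is short, but deciding what belongs in the vocabularies and relationships is domain work that no amount of engineering replaces.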

The cost dimension that surprises most organizations: model updates

Of all the dimensions above, the one that most consistently surprises organizations in the build analysis is the last one: ongoing model governance, and in particular the cost and operational risk of updating the information model as things change.

In a regulated environment, your model is not static. Regulations change. Product categories evolve. New substances get classified. Reference data standards are updated. An organization operating across multiple jurisdictions or product lines will face model change as a recurring operational reality, not an occasional exception.

When you build your own backbone, every model change is a program of work. Engineering effort to update the schema. Impact analysis to understand what downstream systems are affected. Testing to verify that the change has propagated correctly. Coordination with the teams whose systems depend on the model. This is not a problem that goes away as the backbone matures - it is a structural property of in-house builds that do not have model change designed in from the outset.
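
The impact-analysis step can be sketched as a graph traversal. The example below assumes a hypothetical dependency map (`DEPENDENTS`) from model elements to the systems that consume them; the element and system names are invented for illustration. Given a changed element, it walks the map to list everything downstream that would need review and testing.

```python
from collections import deque

# Hypothetical map: each key is consumed by the listed dependents.
DEPENDENTS = {
    "hazard_class": ["labelling_service", "regulatory_export"],
    "labelling_service": ["partner_portal"],
    "regulatory_export": [],
    "partner_portal": [],
}


def impacted_by(changed: str, dependents: dict[str, list[str]]) -> list[str]:
    """Breadth-first walk returning everything downstream of `changed`."""
    seen = set()
    order = []
    queue = deque([changed])
    while queue:
        node = queue.popleft()
        for dep in dependents.get(node, []):
            if dep not in seen:
                seen.add(dep)
                order.append(dep)
                queue.append(dep)
    return order


print(impacted_by("hazard_class", DEPENDENTS))
# ['labelling_service', 'regulatory_export', 'partner_portal']
```

In an in-house build, keeping a map like this accurate is itself ongoing work, which is part of the cost described above.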

Data Graphs approaches this differently. The platform supports programmatic model updates via API - meaning that model changes can be structured, versioned, and executed through a controlled programmatic interface, rather than requiring bespoke engineering effort each time. Reference data changes, relationship updates, new classification schemes: these are operations the backbone is designed to handle, not exceptions that require a project to manage.
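
This post does not document the Data Graphs API, so the following is not it. As a generic sketch of what "structured, versioned" model changes can mean in practice, assume a hypothetical in-memory `ModelStore` in which each change is a small declarative operation and every applied change produces a new version plus an audit entry, leaving earlier versions untouched.

```python
import copy
from dataclasses import dataclass, field


@dataclass
class ModelStore:
    """Hypothetical store that keeps every version of a model (v0 is empty)."""
    versions: list[dict] = field(default_factory=lambda: [{"vocabularies": {}}])
    log: list[str] = field(default_factory=list)

    @property
    def current(self) -> dict:
        return self.versions[-1]

    def apply(self, op: dict) -> int:
        """Apply one change operation and return the new version number."""
        model = copy.deepcopy(self.current)  # earlier versions stay untouched
        vocab = model["vocabularies"].setdefault(op["vocabulary"], [])
        if op["action"] == "add_term":
            if op["term"] in vocab:
                raise ValueError(f"{op['term']!r} already exists")
            vocab.append(op["term"])
        elif op["action"] == "remove_term":
            vocab.remove(op["term"])  # raises ValueError if the term is absent
        else:
            raise ValueError(f"unsupported action: {op['action']!r}")
        self.versions.append(model)
        version = len(self.versions) - 1
        self.log.append(f"v{version}: {op['action']} {op['term']}")
        return version


store = ModelStore()
store.apply({"action": "add_term", "vocabulary": "hazard_class", "term": "irritant"})
v = store.apply({"action": "add_term", "vocabulary": "hazard_class", "term": "toxic"})

print(v, store.current["vocabularies"]["hazard_class"])   # 2 ['irritant', 'toxic']
print(store.versions[1]["vocabularies"]["hazard_class"])  # ['irritant']
print(store.log)
```

The design choice worth noting is that a failed operation never alters the stored versions, because changes are made on a copy and only appended once they succeed. A production system would also need persistence, access control, and propagation to downstream consumers, none of which this sketch attempts.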

For organizations operating in fast-moving regulatory environments, this is not a marginal efficiency gain. It is the difference between an information backbone that remains current - and therefore trustworthy - and one that drifts behind the operational reality it is supposed to govern.

The hidden cost of the SME tooling problem

There is a second cost dimension that deserves specific attention, because it tends to be systematically underestimated in build proposals: the cost of building tooling that domain experts can actually use.

It is easy to specify. Subject matter experts need a way to work with the information model - to populate it, validate it, maintain reference data, and manage classifications - without requiring IT involvement for routine operations. The spec is clear. The build is not.

Building tooling that genuinely puts SMEs in control of the governed model requires the tooling to be designed around the information model itself - not around what a development team found natural to build, and not around a generic data management UI that has been adapted. When this sequencing is reversed - when the UX is built before the model is sound - the tooling embeds assumptions about meaning that the model then has to contort itself to accommodate. The result is a system that sits one step away from the backbone it was supposed to expose.

Most organizations that have attempted to build SME tooling for an information backbone have built it more than once. The first version does not survive contact with real domain experts working at scale. The second is better. The third is usually when the scope of the challenge becomes clear. That iteration cost is rarely in the original build estimate.

A Total Cost of Ownership (TCO) comparison across the key dimensions

This section maps the primary cost dimensions against the build and buy options. The intent is not to present a specific financial model - costs vary significantly by organization, scale, and domain - but to make the comparison across dimensions explicit.

| Cost dimension | Build in-house | Buy Data Graphs |
| --- | --- | --- |
| Model-centric design & storage | Significant: graph data model engineering, schema design, governance tooling from scratch | Included: purpose-built, governed model infrastructure ready to configure |
| Model-centric UI for SMEs | High: custom UX development to expose the model to non-technical users - typically underestimated and rebuilt multiple times | Included: SME-native tooling designed around the information model from the outset |
| API surface (semantic, not just data) | High: APIs that reflect meaning - not just field access - require significant architectural investment to design and maintain | Included: backbone APIs expose governed semantics as a standard capability |
| Infrastructure & hosting | Ongoing: graph database licensing, scaling, security, uptime, backup, failover - a specialist infrastructure burden | Included in subscription: managed, scalable, enterprise-grade |
| Model updates & evolution | High risk: model changes require coordinated engineering effort; downstream system impacts must be manually mapped and tested | Low risk: programmatic model updates via API - changes are structured, versioned, and propagated by design |
| Reference data management | Manual: loading, governing, and maintaining shared vocabularies and external standards requires dedicated tooling and process | Structured: reference data is a first-class concern in the platform, not an afterthought |
| Regulated environment readiness | Expensive: auditability, traceability, version history, and defensible outputs require deliberate - and costly - engineering | By design: the platform is built for environments where accuracy and traceability are non-negotiable |

The pattern across these dimensions is consistent: in a build scenario, each line item requires dedicated engineering, architecture, or operational investment. In a Data Graphs deployment, each is a platform capability that is configured, not built - with the exception of the domain-specific model work, which requires genuine subject matter expertise regardless of the path taken. A build also needs time and resources simply to reach parity, and during that time a bought platform keeps shipping new features.

The question to ask before you decide

The most useful framing for the build vs buy decision is not "can we build this?" - the answer to that question is almost always yes, in principle.

The more useful question is:

"What would we need to maintain in perpetuity to keep this trustworthy - and is that the best use of our engineering capacity?"

A governed information backbone in a high-trust environment is not a system you build and hand over. It is an operational capability that requires ongoing investment: in model governance, in reference data management, in tooling that keeps SMEs productive, and in the ability to update the model as the world changes. Every one of those demands is a permanent claim on engineering and governance resources. For most organizations, those resources are better directed at the domain knowledge and product differentiation that only they can build - not at recreating infrastructure that already exists as a mature, purpose-built platform.

Data Graphs is built to make that total cost manageable - not by removing the domain expertise requirement, which cannot be outsourced, but by providing the infrastructure, the tooling, and the model governance capability that would otherwise have to be engineered from scratch, and maintained indefinitely.

The backbone itself is the investment that compounds. The platform that hosts it should not be.

Simple Steps for COOs

- Before any build estimate, ask for the ongoing cost, not just the build cost. Request a breakdown that includes model governance, SME tooling iteration, infrastructure operations, and the cost of model updates over a three-year horizon. If the estimate covers only the initial build, it is not a complete picture.

- Test the model update assumption directly. Ask your engineering team: if a regulatory requirement changed the classification scheme for a key product category, how long would it take to update the model and propagate that change to every downstream system? The answer to that question tells you whether model change has been designed in - or designed around.

- Apply the SME tooling test before committing. Before signing off on a build approach, ask who will maintain the information model when the initial engineering team moves on. If the answer requires IT involvement for routine updates, the tooling has not been built for the people who need to use it. That gap will not close on its own.
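
The first step above asks for ongoing cost over a three-year horizon, not just build cost. As a minimal sketch of that arithmetic, the function below adds a one-off build figure to recurring annual lines. The line names follow the breakdown suggested in that step; the figures are arbitrary placeholder units chosen only to make the example run, not estimates of any real cost.

```python
def horizon_cost(build: float, annual: dict[str, float], years: int = 3) -> float:
    """One-off build cost plus every recurring annual line over `years`."""
    return build + years * sum(annual.values())


# Placeholder units only; substitute your own organization's estimates.
annual_lines = {
    "model_governance": 2.0,
    "sme_tooling_iteration": 3.0,
    "infrastructure_operations": 4.0,
    "model_updates": 1.0,
}

build_cost = 10.0
total = horizon_cost(build=build_cost, annual=annual_lines)
ongoing_share = (total - build_cost) / total

print(total)          # 40.0
print(ongoing_share)  # 0.75
```

With these placeholder inputs, three quarters of the three-year total sits outside the initial build. The real proportion depends entirely on your own figures, which is why the breakdown is worth requesting.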

For more on this topic, have a look at our "Machine-Readable Information Backbone" series.

Previously in The COO's Machine-Readable Information Backbone series:

Post 1: Preparing for true machine-readable digital product labels - Machine readability is a meaning problem, not a format problem. Most organizations focus on file formats and miss the foundational architecture problem entirely. This is what it actually demands from your organization.

Post 2: Machine-readable isn't just for machines - Better information architecture foundations improve the experience of the humans who work with product data every day - your SMEs, compliance teams, and end users. Better foundations for machines are better foundations for people.

Post 3: Why AI needs stable meaning - AI operating on ungoverned data is making guesses, and in regulated environments that isn't good enough. A proper information backbone eliminates hallucination for the facts that matter, and gives COOs the freedom to swap AI providers without operational friction.

Post 4: What is an information backbone? A plain-language definition for operational leaders - Written for organizations that already have systems, already have data, and are still asking why none of it feels reliable.